Codex Swarm — DAG-Optimized Parallel Codex CLI Orchestrator

Executive Summary

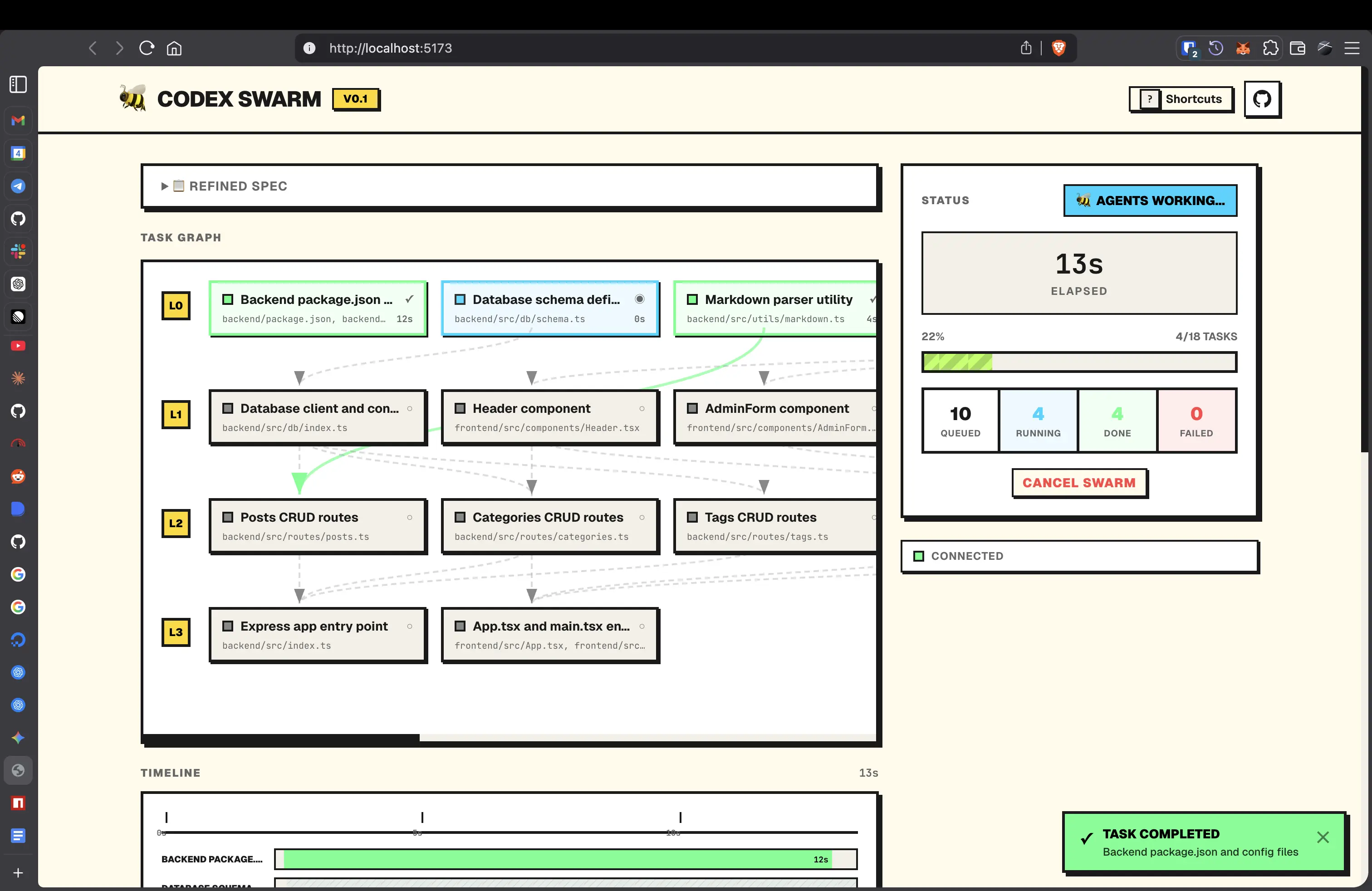

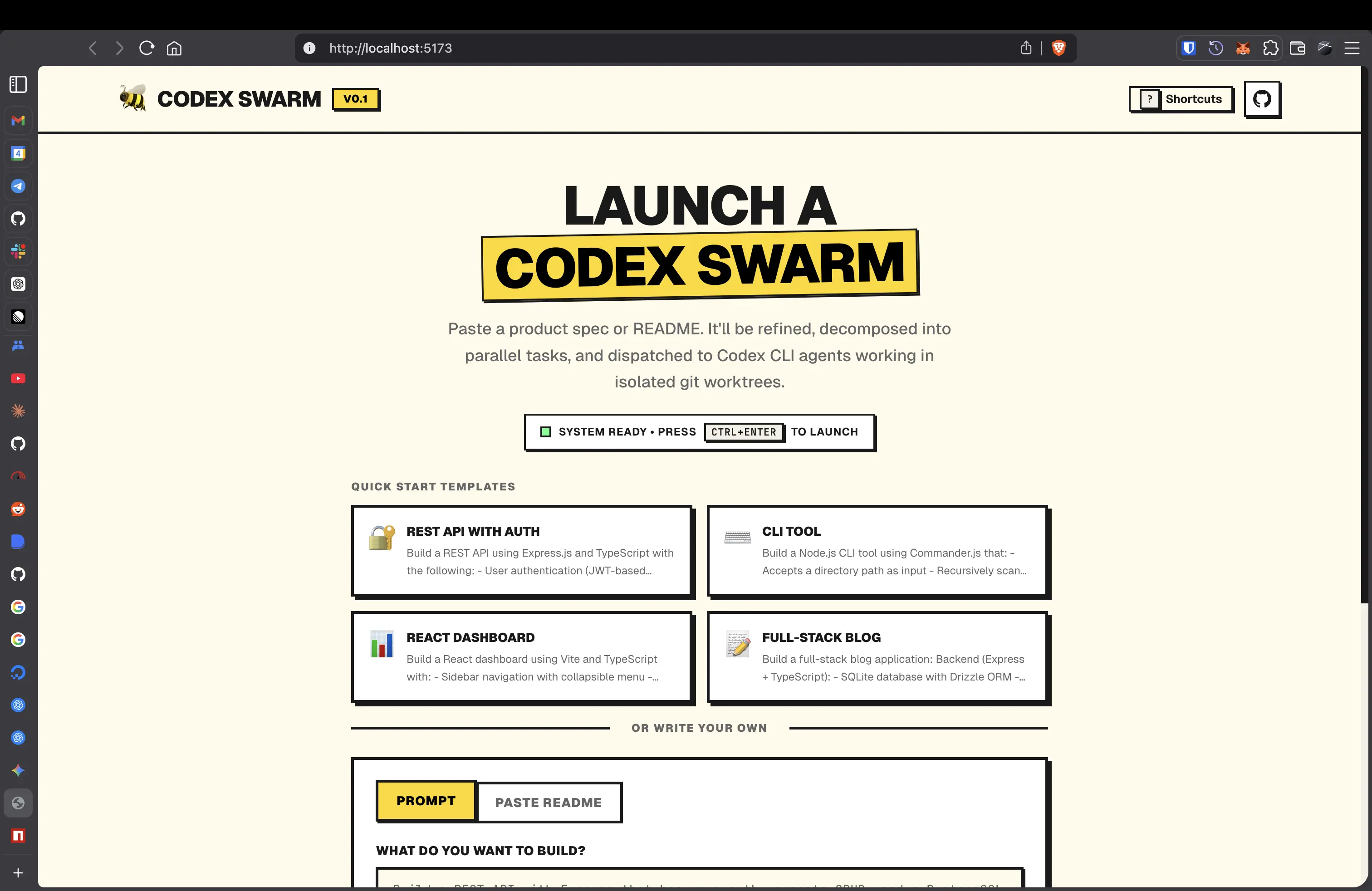

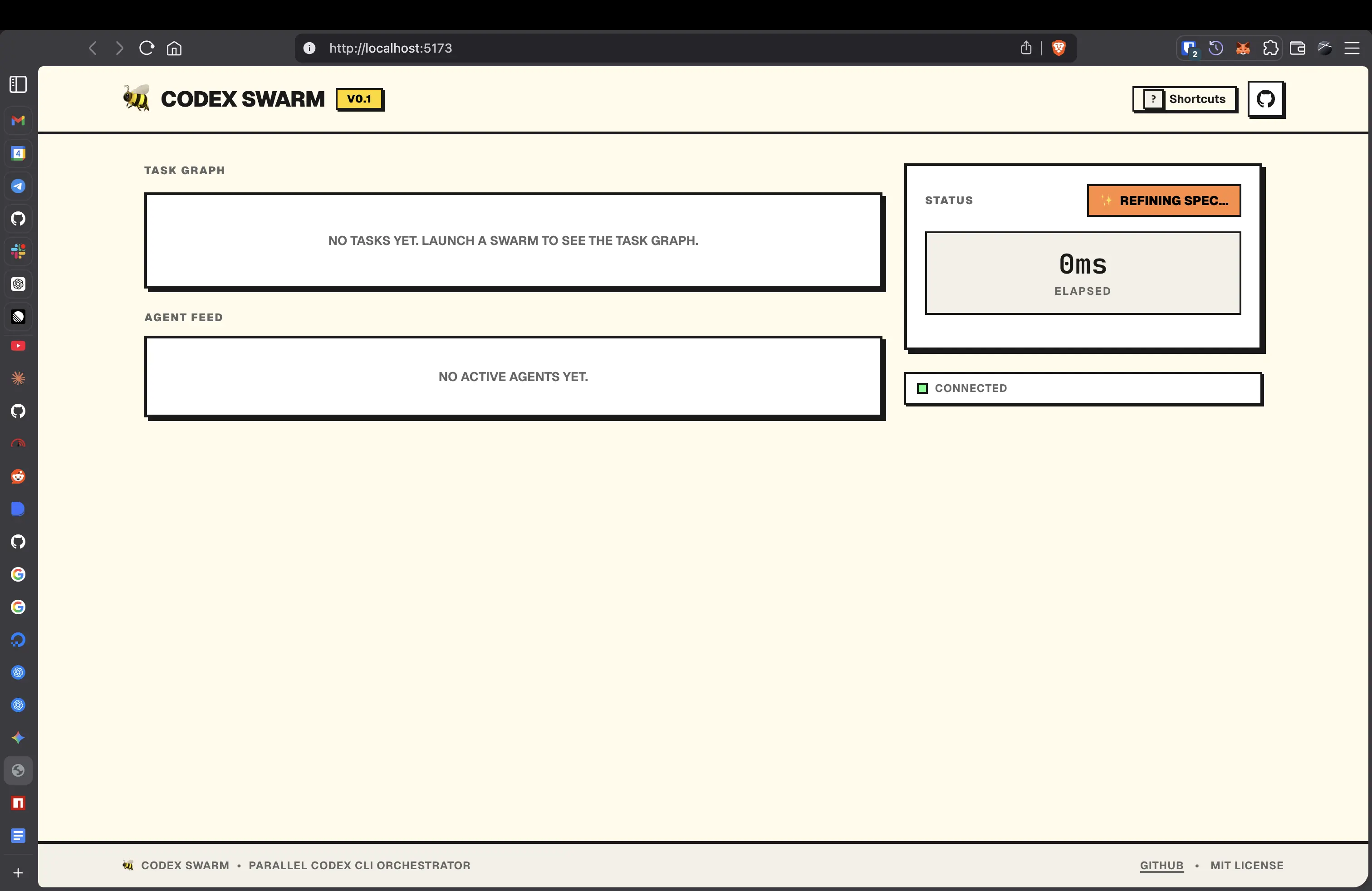

Codex Swarm transforms a single natural-language product specification into a dependency-aware Directed Acyclic Graph (DAG) of coding tasks, then dispatches them to parallel Codex CLI agents running inside Docker-sandboxed containers. A real-time neobrutalist dashboard renders the entire execution pipeline live — from spec refinement through task completion.

The core innovation is a frontier-based DAG scheduler that maximizes Codex CLI throughput by distinguishing blocking from non-blocking tasks: every task whose dependencies are satisfied is dispatched as soon as a concurrency slot is free, while true data dependencies between tasks are still respected.

Problem Statement

Today's Codex CLI workflow is fundamentally sequential and manual:

- A developer writes a prompt, fires a single Codex agent, and waits.

- Once it finishes, they manually write the next prompt for the next piece of work.

- Tasks that could run concurrently are serialized — wasting time and API capacity.

- There's no dependency tracking, no conflict avoidance, and no orchestration layer.

Codex Swarm eliminates this bottleneck entirely. What used to take an afternoon of copy-pasting prompts completes in minutes of autonomous, parallel execution.

Core Innovation: DAG-Based Task Scheduling

The Problem with Flat Task Lists

Most LLM orchestration tools treat tasks as a flat queue — execute one, then the next. Even "parallel" systems often run everything at once without understanding that Task C depends on Task A's output while Task B is completely independent.

Codex Swarm's Approach

Codex Swarm models task execution as a Directed Acyclic Graph where:

- Nodes represent discrete, file-scoped coding tasks

- Edges represent hard data dependencies (e.g., a service that imports a model must wait for the model to be created)

- Independent branches in the graph execute simultaneously

This is not just a topological sort executed once. The orchestrator uses a frontier-based incremental scheduler that re-evaluates the ready set after every task completion:

                 ┌──────────┐
                 │   Spec   │
                 └────┬─────┘
                      │ LLM Decomposition
                 ┌────▼─────┐
           ┌─────┤ Task DAG ├─────┐
           │     └──────────┘     │
      ┌────▼───┐            ┌─────▼───┐
      │ Task A │            │ Task B  │   ← Independent: run in parallel
      │(models)│            │ (utils) │
      └────┬───┘            └─────┬───┘
           │                      │
      ┌────▼───┐            ┌─────▼───┐
      │ Task C │            │ Task D  │   ← Blocked until A/B complete
      │ (API)  │            │  (CLI)  │
      └────┬───┘            └─────────┘
           │
      ┌────▼───┐
      │ Task E │   ← Cascades only after C finishes
      │(tests) │
      └────────┘

Blocking vs. Non-Blocking: Maximizing Utilization

The DAG structure inherently classifies tasks:

| Type | Definition | Scheduling Behavior |
|---|---|---|
| Non-blocking | Tasks with zero unmet dependencies | Dispatched immediately, up to maxConcurrency slots |
| Blocking | Tasks with unmet dependencies | Held in queued state until all prerequisites reach completed |

The scheduler runs on every state transition — not on a timer. When Task A completes, the scheduler instantly evaluates which blocked tasks are now unblocked and fills available concurrency slots. This event-driven approach eliminates idle time between waves.

Key implementation detail (from orchestrator.ts):

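    // Frontier-based scheduling: runs after EVERY task state change
    private scheduleReady(): void {
      const ready = [...this.tasks.values()].filter((t) => {
        const state = this.taskStates.get(t.id)!;
        if (state.status !== 'queued') return false;
        // A task is ready only when ALL its dependencies are completed
        return t.dependencies.every(
          (dep) => this.taskStates.get(dep)?.status === 'completed'
        );
      });

      // Fill only the free slots; clamping at 0 keeps slice() from receiving a
      // negative end index, which would select all but the last few ready tasks
      const slots = Math.max(0, this.maxConcurrency - this.runningCount);
      const toStart = ready.slice(0, slots);
      for (const task of toStart) {
        // dispatch() is assumed to mark the task 'running' and bump runningCount
        // synchronously; otherwise a second scan could start the same task twice
        this.dispatch(task);
      }
    }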

This is called in the finally block of every task dispatch — meaning the moment a slot opens, it's filled. No polling delay, no batch boundaries.
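The scheduling loop above can be exercised in isolation. What follows is a minimal, synchronous sketch under stated assumptions, not the orchestrator.ts implementation: FrontierScheduler, start(), finish(), and the dispatched log are hypothetical names, "dispatching" only marks a task running so no Docker or LLM is involved, and failure propagation is deliberately left out.

```typescript
// Hypothetical, synchronous model of frontier scheduling (not the orchestrator.ts code).
type Status = 'queued' | 'running' | 'completed' | 'failed';

interface TaskSpec {
  id: string;
  dependencies: string[];
}

class FrontierScheduler {
  private readonly tasks: TaskSpec[];
  private readonly maxConcurrency: number;
  private readonly status = new Map<string, Status>();
  private runningCount = 0;
  readonly dispatched: string[] = []; // order in which tasks were started

  constructor(tasks: TaskSpec[], maxConcurrency: number) {
    this.tasks = tasks;
    this.maxConcurrency = maxConcurrency;
    for (const t of tasks) this.status.set(t.id, 'queued');
  }

  start(): void {
    this.scheduleReady();
  }

  // Stand-in for the end of a real dispatch (its finally block): record the
  // outcome, free the slot, and immediately re-scan the frontier.
  finish(id: string, ok: boolean): void {
    if (this.status.get(id) !== 'running') throw new Error(`${id} is not running`);
    this.status.set(id, ok ? 'completed' : 'failed');
    this.runningCount--;
    this.scheduleReady();
  }

  statusOf(id: string): Status | undefined {
    return this.status.get(id);
  }

  private scheduleReady(): void {
    const ready = this.tasks.filter(
      (t) =>
        this.status.get(t.id) === 'queued' &&
        t.dependencies.every((dep) => this.status.get(dep) === 'completed'),
    );
    const slots = Math.max(0, this.maxConcurrency - this.runningCount);
    for (const task of ready.slice(0, slots)) {
      // Mark as running before "dispatching" so a later scan cannot start it twice.
      this.status.set(task.id, 'running');
      this.runningCount++;
      this.dispatched.push(task.id);
    }
  }
}
```

Run against the example graph with maxConcurrency set to 2, and assuming C depends on A, D on B, and E on C, A and B start first; calling finish('A', true) starts C in the freed slot while B is still running, so no task waits for a whole wave to drain.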

Cascading Failure Propagation

When a task fails, the orchestrator doesn't leave its dependents in a permanent queued state. It proactively fails downstream tasks with a clear causal message:

    private failDependents(parentId: string): void {
      for (const [id, task] of this.tasks) {
        if (task.dependencies.includes(parentId)) {
          const state = this.taskStates.get(id)!;
          if (state.status === 'queued') {
            state.status = 'failed';
            state.error = `Dependency "${parentId}" failed`;
            // ... emit update
            this.failDependents(id); // Recursive propagation
          }
        }
      }
    }

This recursive propagation gives the user immediate feedback about the blast radius of a failure and enables targeted retries. Retrying a parent task automatically resets its failed dependents to queued.
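The recursive failDependents() above rescans every task at each level. A minimal standalone sketch of the same cascade, plus one possible retry reset, can instead walk a reverse-edge index iteratively. The function names, the Map-based state, and the rule that a retry re-queues only dependents whose error names a task on the retried chain are assumptions for illustration, not the project's code.

```typescript
// Hypothetical standalone sketch of cascading failure and retry reset.
type Status = 'queued' | 'running' | 'completed' | 'failed';

interface TaskState {
  status: Status;
  error?: string;
}

// Reverse the dependency edges: parent id -> ids of tasks that depend on it.
function dependentsOf(deps: Map<string, string[]>): Map<string, string[]> {
  const index = new Map<string, string[]>();
  for (const [id, parents] of deps) {
    for (const parent of parents) {
      index.set(parent, [...(index.get(parent) ?? []), id]);
    }
  }
  return index;
}

// Fail every still-queued transitive dependent of parentId; returns the ids failed.
function failDependents(
  deps: Map<string, string[]>,
  states: Map<string, TaskState>,
  parentId: string,
): string[] {
  const index = dependentsOf(deps);
  const failed: string[] = [];
  const stack = [parentId];
  while (stack.length > 0) {
    const current = stack.pop()!;
    for (const child of index.get(current) ?? []) {
      const state = states.get(child);
      if (state?.status === 'queued') {
        state.status = 'failed';
        state.error = `Dependency "${current}" failed`;
        failed.push(child);
        stack.push(child);
      }
    }
  }
  return failed;
}

// Re-queue a task plus the dependents that failed only because of this chain.
function resetForRetry(
  deps: Map<string, string[]>,
  states: Map<string, TaskState>,
  taskId: string,
): void {
  const index = dependentsOf(deps);
  states.set(taskId, { status: 'queued' });
  const stack = [taskId];
  while (stack.length > 0) {
    const current = stack.pop()!;
    for (const child of index.get(current) ?? []) {
      if (states.get(child)?.error === `Dependency "${current}" failed`) {
        states.set(child, { status: 'queued' });
        stack.push(child);
      }
    }
  }
}
```

Building the index once makes each cascade linear in tasks plus edges, and matching on the exact error message keeps a retry from re-queuing a task that failed for an unrelated reason.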

Architecture

    ┌──────────────────────────────────────────────────────────────────┐
    │                        Svelte 5 Dashboard                        │
    │     DAG Visualization │ Agent Feed │ Timeline │ Diff Viewer      │
    │              (WebSocket: real-time, bidirectional)               │
    └────────────────────────────────┬─────────────────────────────────┘
                                     │
    ┌────────────────────────────────▼─────────────────────────────────┐
    │                       Hono HTTP/WS Server                        │
    │  ┌─────────────┐   ┌───────────────┐   ┌────────────────────┐    │
    │  │    Spec     │   │  Decomposer   │   │    Orchestrator    │    │
    │  │   Refiner   │   │   (DAG Gen)   │   │  (Frontier Sched)  │    │
    │  │  (o3-mini)  │   │   (o3-mini)   │   │                    │    │
    │  └──────┬──────┘   └──────┬────────┘   └────────┬───────────┘    │
    │         │                 │                     │                │
    │         ▼                 ▼                     ▼                │
    │   Refined Spec   →  Task DAG JSON   →   Parallel Dispatch        │
    └────────────────────────────────┬─────────────────────────────────┘
                                     │
              ┌──────────────────────┼──────────────────────┐
              │                      │                      │
    ┌─────────▼─────────┐  ┌─────────▼─────────┐  ┌─────────▼─────────┐
    │  Docker Container │  │  Docker Container │  │  Docker Container │
    │   (4GB / 2 CPU)   │  │   (4GB / 2 CPU)   │  │   (4GB / 2 CPU)   │
    │   Sandbox Copy    │  │   Sandbox Copy    │  │   Sandbox Copy    │
    │      of Repo      │  │      of Repo      │  │      of Repo      │
    └───────────────────┘  └───────────────────┘  └───────────────────┘

Component Breakdown

| Component | Tech | Role |
|---|---|---|
| Spec Refiner | OpenAI Chat API (o3-mini) | Converts rough user input into a precise engineering brief |
| Decomposer | OpenAI Chat API (o3-mini, JSON mode) | Generates file-scoped task DAG with explicit dependency edges |
| Orchestrator | TypeScript, EventEmitter | Frontier-based scheduler, concurrency control, lifecycle management |
| Agent Planner | OpenAI Chat API (o3-mini, JSON mode) | Generates concrete write_file and shell action plans per task |
| Sandbox Runner | Docker, child_process | Isolated container execution, diff capture, change application |
| Dashboard | Svelte 5 (Runes), Tailwind CSS, WebSocket | Real-time DAG visualization, agent logs, diff viewer |

Docker Sandboxing: Security Through Isolation

Each Codex agent runs inside a resource-constrained Docker container — not directly on the host. This is a critical security boundary: LLM-generated code is untrusted by default.

Sandbox Lifecycle

    1. createSandboxCopy()    → Full repo copy to /tmp path (no shared state)
    2. docker run             → Container with repo mounted at /workspace
                                --memory 4g --cpus 2 (resource caps)
    3. docker exec            → LLM-planned actions executed sequentially
    4. getSandboxDiff()       → git diff captured for review
    5. applySandboxChanges()  → Only changed files copied back to original repo
    6. docker kill + rm       → Container destroyed, sandbox deleted
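Steps 1 and 2 can be sketched with Node's standard library. createSandboxCopy and dockerRunArgs are hypothetical helpers rather than the sandbox-runner.ts code; the temp-directory prefix, the image name passed in, and the sleep infinity keep-alive (so later docker exec calls have a running container) are assumptions. Only the --memory 4g and --cpus 2 caps and the /workspace mount come from the lifecycle above.

```typescript
import { cpSync, existsSync, mkdtempSync } from 'node:fs';
import { tmpdir } from 'node:os';
import { join } from 'node:path';

// Hypothetical helper: an independent full copy of the repo under the OS temp dir,
// so nothing a task does touches the original checkout.
function createSandboxCopy(repoPath: string): string {
  if (!existsSync(repoPath)) throw new Error(`repo not found: ${repoPath}`);
  const sandbox = mkdtempSync(join(tmpdir(), 'codex-swarm-'));
  cpSync(repoPath, sandbox, { recursive: true });
  return sandbox;
}

// Hypothetical helper: `docker run` arguments carrying the documented resource caps.
// The image name and the keep-alive command are assumptions.
function dockerRunArgs(containerName: string, sandboxPath: string, image: string): string[] {
  return [
    'run', '--detach',
    '--name', containerName,
    '--memory', '4g',
    '--cpus', '2',
    '--volume', `${sandboxPath}:/workspace`,
    '--workdir', '/workspace',
    image,
    'sleep', 'infinity', // keeps the container alive for later `docker exec` calls
  ];
}
```

The argument list would then go to a process call such as execFileSync('docker', dockerRunArgs(name, sandbox, image)) from node:child_process. Teardown mirrors step 6: docker kill and docker rm for the container, then fs.rmSync(sandbox, { recursive: true, force: true }) for the copy.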

Why This Matters

| Threat | Mitigation |
|---|---|
| Malicious code execution | Runs inside container with resource limits, not on host |
| File system escape | Sandbox is a full copy — original repo untouched until explicit apply step |
| Resource exhaustion | --memory 4g --cpus 2 hard caps per container |
| Cross-task interference | Each task gets its own sandbox copy and container — complete isolation |
| Supply chain attacks | Container image is built from a controlled Dockerfile.agent with minimal tooling |

The Docker image (Dockerfile.agent) is deliberately minimal: Node 22, git, curl, build-essential, and Python 3 — nothing more.

Diff-Before-Apply Pattern

Changes aren't blindly merged. The orchestrator:

- Captures a git diff --cached from the sandbox

- Streams the diff to the dashboard for visual review

- Copies only the files listed in git diff --cached --name-only back to the original repo

This means the user sees exactly what changed before it lands.
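A sketch of the capture-and-copy steps, assuming the sandbox copy is a git working tree. Because git diff --cached only reports staged changes, the sketch stages everything with git add --all first; whether the project does the same is an assumption. captureStagedDiff, parseNameOnly, and copyChangedFiles are hypothetical names. Two limitations are left visible: files deleted in the sandbox are reported rather than removed from the original repo, and paths that git quotes in --name-only output (unusual characters) would need the -z form to be parsed safely.

```typescript
import { execFileSync } from 'node:child_process';
import { copyFileSync, existsSync, mkdirSync } from 'node:fs';
import { dirname, join } from 'node:path';

// Stage everything, then read the staged diff and the list of changed paths.
function captureStagedDiff(sandboxPath: string): { diff: string; files: string[] } {
  const git = (...args: string[]): string =>
    execFileSync('git', args, { cwd: sandboxPath, encoding: 'utf8' });
  git('add', '--all');
  return {
    diff: git('diff', '--cached'),
    files: parseNameOnly(git('diff', '--cached', '--name-only')),
  };
}

// One repo-relative path per line; empty lines (e.g. the trailing newline) are dropped.
function parseNameOnly(output: string): string[] {
  return output.split('\n').filter((line) => line.length > 0);
}

// Copy each changed file back into the original repo. Paths that no longer exist
// in the sandbox (deletions) are returned instead of copied.
function copyChangedFiles(sandboxPath: string, repoPath: string, files: string[]): string[] {
  const notCopied: string[] = [];
  for (const rel of files) {
    const src = join(sandboxPath, rel);
    if (!existsSync(src)) {
      notCopied.push(rel);
      continue;
    }
    const dest = join(repoPath, rel);
    mkdirSync(dirname(dest), { recursive: true });
    copyFileSync(src, dest);
  }
  return notCopied;
}
```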

LLM-Driven Agent Architecture

Unlike systems that shell out to codex --full-auto and hope for the best, Codex Swarm uses a two-phase LLM architecture:

Phase 1: Server-Side Planning (OpenAI SDK)

The planTask() function (agent.ts) calls the LLM with:

- The task description and target files

- The current project file tree for context

- A structured system prompt requesting a JSON action plan

The LLM responds with concrete actions:

    {
      "actions": [
        { "type": "shell", "command": "mkdir -p /workspace/src/models" },
        { "type": "write_file", "path": "/workspace/src/models/user.ts", "content": "..." },
        { "type": "shell", "command": "cd /workspace && npm install zod" }
      ]
    }
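JSON mode guarantees that the planner's reply parses as JSON, not that it matches the expected shape, so a plan is worth validating before anything runs. A minimal sketch, assuming the two action shapes in the example above and a rule (not stated in this document) that every write_file path must stay under /workspace; Action, parsePlan, and WORKSPACE_PREFIX are hypothetical.

```typescript
// Hypothetical types mirroring the JSON plan above.
type Action =
  | { type: 'shell'; command: string }
  | { type: 'write_file'; path: string; content: string };

// Assumption: every file write must stay under the container's /workspace mount.
const WORKSPACE_PREFIX = '/workspace/';

function parsePlan(raw: string): Action[] {
  const data: unknown = JSON.parse(raw); // JSON mode guarantees parseable JSON, not this shape
  const list = (data as { actions?: unknown } | null)?.actions;
  if (!Array.isArray(list)) throw new Error('plan must be an object with an "actions" array');

  const actions: Action[] = [];
  for (let i = 0; i < list.length; i++) {
    const item = list[i] as Record<string, unknown> | null;
    if (item?.type === 'shell' && typeof item.command === 'string') {
      actions.push({ type: 'shell', command: item.command });
    } else if (
      item?.type === 'write_file' &&
      typeof item.path === 'string' &&
      typeof item.content === 'string'
    ) {
      // Reject paths outside the mount and any ".." traversal segment.
      if (!item.path.startsWith(WORKSPACE_PREFIX) || item.path.split('/').includes('..')) {
        throw new Error(`action ${i}: path escapes ${WORKSPACE_PREFIX}: ${item.path}`);
      }
      actions.push({ type: 'write_file', path: item.path, content: item.content });
    } else {
      throw new Error(`action ${i}: unrecognized or malformed action`);
    }
  }
  return actions;
}
```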

Phase 2: Docker-Side Execution

Each action is executed sequentially inside the container via docker exec. The separation ensures:

- The LLM never has direct host access: it only produces a plan

- The execution environment is sandboxed: the plan runs inside Docker

- Partial failures don't abort: a failed npm install doesn't prevent subsequent file writes

Output from every action is streamed in real-time to the dashboard via WebSocket.
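The execution loop can be sketched as follows. toDockerExec, executePlan, and the injectable Runner are hypothetical names, and the container is assumed to provide sh, dirname, and cat. write_file content is piped over stdin and the path is passed as a positional argument to sh -c, so neither has to be shell-escaped. A non-zero exit is recorded and the loop moves on, matching the partial-failure behavior described above; injecting the runner lets the loop be exercised without Docker.

```typescript
import { spawnSync } from 'node:child_process';

type Action =
  | { type: 'shell'; command: string }
  | { type: 'write_file'; path: string; content: string };

interface StepResult {
  action: Action;
  ok: boolean;
  output: string;
}

type Runner = (args: string[], input?: string) => { ok: boolean; output: string };

// Map one action to `docker exec` arguments plus optional stdin.
function toDockerExec(container: string, action: Action): { args: string[]; input?: string } {
  if (action.type === 'shell') {
    return { args: ['exec', container, 'sh', '-c', action.command] };
  }
  // $1 is the target path; the file body arrives on stdin.
  const script = 'mkdir -p "$(dirname "$1")" && cat > "$1"';
  return { args: ['exec', '-i', container, 'sh', '-c', script, 'sh', action.path], input: action.content };
}

// Default runner: shells out to the docker CLI and merges stdout and stderr.
const dockerRunner: Runner = (args, input) => {
  const r = spawnSync('docker', args, { input, encoding: 'utf8' });
  return { ok: r.status === 0, output: `${r.stdout ?? ''}${r.stderr ?? ''}` };
};

// Run every action in order; a failure is recorded but never stops later actions.
function executePlan(
  container: string,
  actions: Action[],
  run: Runner = dockerRunner,
  onOutput?: (chunk: string) => void,
): StepResult[] {
  const results: StepResult[] = [];
  for (const action of actions) {
    const { args, input } = toDockerExec(container, action);
    const { ok, output } = run(args, input);
    onOutput?.(output); // e.g. forwarded to the dashboard as agent_output
    results.push({ action, ok, output });
  }
  return results;
}
```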

Real-Time Dashboard: Neobrutalist Design

The dashboard is built with Svelte 5 Runes and a custom neobrutalist design system — thick borders, hard shadows, no border radius, high-contrast accent colors, and bold typography.

Why Neobrutalism for a Developer Tool?

Neobrutalism solves a specific problem: information density without visual ambiguity. When monitoring 4+ parallel agents:

- Zero border-radius → Hard geometric edges make panel boundaries unambiguous

- 3px solid borders → Panels are visually distinct even at a glance

- Status colors (cyan/green/red/yellow) → Instant task state recognition without reading labels

- Offset box shadows → Depth hierarchy is immediately clear, no subtle gradients to decode

- Monospace fonts for data → Diffs, logs, and output align perfectly

CSS Architecture

The design system (app.css) defines reusable neobrutalist primitives:

    .nb-panel {
      border: 3px solid var(--border);
      box-shadow: 4px 4px 0px var(--border);
      background-color: var(--card);
    }

    .nb-shadow-hover:hover {
      box-shadow: 6px 6px 0px var(--border);
      transform: translate(-2px, -2px);
    }

    .nb-shadow-hover:active {
      box-shadow: 1px 1px 0px var(--border);
      transform: translate(3px, 3px);
    }

Both light (#FFFBEB warm cream) and dark (#1a1a2e deep navy) themes are fully implemented with matching accent colors.

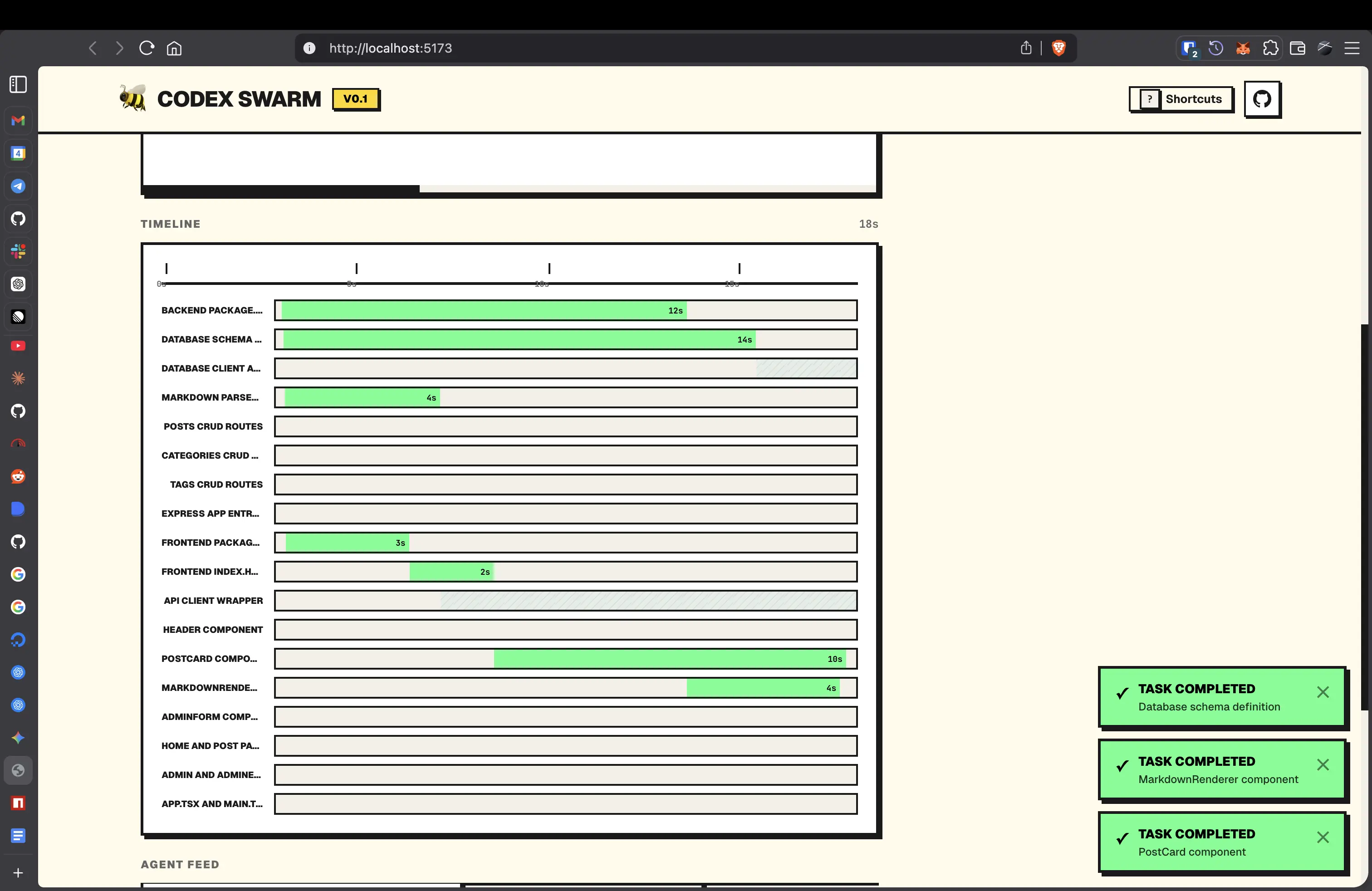

Dashboard Panels

| Panel | Purpose | Update Frequency |
|---|---|---|
| DAG Graph | SVG-rendered task nodes with dependency edges, color-coded by status | Every task_update event |
| Agent Feed | Streaming terminal output from each container, per-task filterable | Every agent_output chunk |
| Timeline | Gantt-style horizontal bars showing task start/end times and overlap | Every status transition |
| Diff Viewer | Syntax-highlighted unified diff for each completed task | On task completion |
| Summary | Live counters — running, queued, completed, failed, elapsed time | Derived reactively |
| Export Panel | Download full run data for post-mortem analysis | On demand |

Reactive State Management

The store (swarm.svelte.ts) uses Svelte 5's $state and $derived runes for zero-boilerplate reactivity:

    // All derived values recompute automatically on any task state change
    get running() { return this.tasks.filter(t => t.status === 'running').length; }
    get queued() { return this.tasks.filter(t => t.status === 'queued').length; }

End-to-End Pipeline

    User Input → Refine     → Decompose  → Schedule   → Execute    → Merge      → Visualize
    │            │            │            │            │            │            │
    │ raw spec   │ o3-mini    │ o3-mini    │ frontier   │ Docker     │ file       │ WebSocket
    │            │ chat API   │ JSON mode  │ scheduler  │ sandbox    │ copy       │ push
    │            │            │            │            │            │            │
    ▼            ▼            ▼            ▼            ▼            ▼            ▼
    "Build a     Clear        Task DAG     Ready set    Isolated     Changed      DAG lights
    REST API     engineering  with edges   computed,    containers   files        up green
    with auth"   brief with   & deps       slots filled per task     applied      in real-time
                 file paths

Detailed Flow

- Spec Refinement: Raw user text → o3-mini → structured engineering brief with file paths, tech stack, and data shapes.

- Repository Context: git ls-files captures the current project structure, providing the decomposer with awareness of existing code.

- DAG Generation: Refined spec + file tree → o3-mini (JSON mode) → validated task graph. Dependencies are verified (all referenced IDs must exist).

- Frontier Scheduling: Tasks with zero unmet deps are dispatched immediately, up to maxConcurrency. Each completion triggers a re-scan.

- Sandboxed Execution: Full repo copy → Docker container → LLM-planned actions → write_file and shell commands executed in sequence.

- Diff Capture: git diff --cached from the sandbox, streamed to the dashboard.

- Change Application: Only modified files are copied back to the original repo.

- Cascading Unlock: Completed task → dependents re-evaluated → next wave dispatched.

- Completion: When all tasks are completed or failed, swarm_complete is emitted with total time and success/failure counts.
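The DAG Generation step checks that every referenced ID exists. A graph can pass that check and still contain a cycle, and under the scheduling rule shown earlier (a task starts only when all its dependencies are completed) the tasks on a cycle would stay queued forever, so swarm_complete would never fire. A sketch of a stricter validator using Kahn's algorithm, assuming the decomposer output is an array of { id, dependencies }; validateDag is a hypothetical name, and the duplicate-ID and cycle checks are additions, not documented behavior.

```typescript
interface TaskNode {
  id: string;
  dependencies: string[];
}

// Returns one valid topological order, or throws on duplicate ids, unknown ids, or cycles.
function validateDag(tasks: TaskNode[]): string[] {
  const ids = new Set<string>();
  for (const t of tasks) {
    if (ids.has(t.id)) throw new Error(`duplicate task id "${t.id}"`);
    ids.add(t.id);
  }

  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const t of tasks) {
    indegree.set(t.id, t.dependencies.length);
    for (const dep of t.dependencies) {
      if (!ids.has(dep)) throw new Error(`task "${t.id}" depends on unknown id "${dep}"`);
      dependents.set(dep, [...(dependents.get(dep) ?? []), t.id]);
    }
  }

  // Kahn's algorithm: repeatedly remove tasks whose dependencies are all satisfied.
  const queue = tasks.filter((t) => t.dependencies.length === 0).map((t) => t.id);
  const order: string[] = [];
  while (queue.length > 0) {
    const id = queue.shift()!;
    order.push(id);
    for (const child of dependents.get(id) ?? []) {
      const remaining = indegree.get(child)! - 1;
      indegree.set(child, remaining);
      if (remaining === 0) queue.push(child);
    }
  }

  // Anything never released is on (or behind) a cycle.
  if (order.length !== tasks.length) {
    const stuck = tasks.filter((t) => !order.includes(t.id)).map((t) => t.id);
    throw new Error(`dependency cycle among: ${stuck.join(', ')}`);
  }
  return order;
}
```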

WebSocket Protocol

All communication between server and dashboard uses a typed WebSocket protocol defined in types.ts:

| Direction | Message Type | Payload | When |
|---|---|---|---|
| Client → Server | start | { spec, repoPath, maxConcurrency } | User clicks "Launch Swarm" |
| Client → Server | cancel | (none) | User cancels the run |
| Client → Server | retry | { taskId } | User retries a failed task |
| Server → Client | refining | { spec } | Spec refinement begins |
| Server → Client | refined | { spec } | Refined spec ready |
| Server → Client | dag | { tasks[] } | DAG generated |
| Server → Client | task_update | { taskId, status, error?, timestamps } | Any task state change |
| Server → Client | agent_output | { taskId, chunk } | Streaming agent output |
| Server → Client | task_diff | { taskId, diff } | Task diff captured |
| Server → Client | swarm_complete | { totalTime, succeeded, failed } | All tasks finished |
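A discriminated union is one natural way to type a protocol like this in TypeScript. The sketch below is a hypothetical rendering, not the contents of types.ts: the payload field types, and the startedAt/finishedAt names standing in for "timestamps", are assumptions. parseClientMessage shows the server validating untrusted frames before acting on them.

```typescript
type TaskStatus = 'queued' | 'running' | 'completed' | 'failed';

type ClientMessage =
  | { type: 'start'; spec: string; repoPath: string; maxConcurrency: number }
  | { type: 'cancel' }
  | { type: 'retry'; taskId: string };

type ServerMessage =
  | { type: 'refining'; spec: string }
  | { type: 'refined'; spec: string }
  | { type: 'dag'; tasks: { id: string; dependencies: string[] }[] }
  | {
      type: 'task_update';
      taskId: string;
      status: TaskStatus;
      error?: string;
      startedAt?: number; // hypothetical stand-ins for "timestamps"
      finishedAt?: number;
    }
  | { type: 'agent_output'; taskId: string; chunk: string }
  | { type: 'task_diff'; taskId: string; diff: string }
  | { type: 'swarm_complete'; totalTime: number; succeeded: number; failed: number };

// Validate an untrusted client frame; returns null for anything malformed.
function parseClientMessage(raw: string): ClientMessage | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== 'object' || data === null) return null;
  const m = data as Record<string, unknown>;
  switch (m.type) {
    case 'start':
      if (
        typeof m.spec === 'string' &&
        typeof m.repoPath === 'string' &&
        typeof m.maxConcurrency === 'number' &&
        Number.isInteger(m.maxConcurrency) &&
        m.maxConcurrency > 0
      ) {
        return { type: 'start', spec: m.spec, repoPath: m.repoPath, maxConcurrency: m.maxConcurrency };
      }
      return null;
    case 'cancel':
      return { type: 'cancel' };
    case 'retry':
      return typeof m.taskId === 'string' ? { type: 'retry', taskId: m.taskId } : null;
    default:
      return null;
  }
}

// The union type-checks every outgoing frame at the call site.
function encodeServerMessage(msg: ServerMessage): string {
  return JSON.stringify(msg);
}
```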

Configuration

All configuration is centralized in config.ts with environment variable validation:

| Variable | Default | Purpose |
|---|---|---|
| OPENAI_API_KEY | required | API key for OpenAI or compatible provider |
| OPENAI_BASE_URL | api.openai.com/v1 | Swap to Ollama, Groq, Together AI, etc. |
| DECOMPOSE_MODEL | o3-mini | Model for DAG generation |
| REFINE_MODEL | o3-mini | Model for spec refinement |
| AGENT_MODEL | o3-mini | Model for agent action planning |
| MAX_CONCURRENCY | 4 | Max parallel Docker containers |
| AGENT_TIMEOUT_MS | 600000 | Per-task timeout (10 min) |

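Because OPENAI_BASE_URL is configurable, Codex Swarm can target any OpenAI-compatible API: local models via Ollama, cloud providers like Groq or Together AI, or Azure OpenAI deployments. The models named in DECOMPOSE_MODEL, REFINE_MODEL, and AGENT_MODEL must exist on that provider, and the decomposer and agent planner models must support the JSON mode those components rely on.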
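A minimal sketch of what environment validation for the table above could look like. The variable names and defaults come from the table; the SwarmConfig shape, the function names, and the rule that numeric values must be positive integers are assumptions rather than the contents of config.ts.

```typescript
interface SwarmConfig {
  openaiApiKey: string;
  openaiBaseUrl: string;
  decomposeModel: string;
  refineModel: string;
  agentModel: string;
  maxConcurrency: number;
  agentTimeoutMs: number;
}

type Env = Record<string, string | undefined>;

// Parse an optional positive-integer variable, falling back when unset or empty.
function positiveInt(env: Env, name: string, fallback: number): number {
  const raw = env[name];
  if (raw === undefined || raw === '') return fallback;
  const n = Number(raw);
  if (!Number.isInteger(n) || n <= 0) {
    throw new Error(`${name} must be a positive integer, got "${raw}"`);
  }
  return n;
}

// Fail fast at startup instead of on the first LLM call.
function loadConfig(env: Env = process.env): SwarmConfig {
  const apiKey = env.OPENAI_API_KEY;
  if (!apiKey) throw new Error('OPENAI_API_KEY is required');
  return {
    openaiApiKey: apiKey,
    openaiBaseUrl: env.OPENAI_BASE_URL || 'https://api.openai.com/v1',
    decomposeModel: env.DECOMPOSE_MODEL || 'o3-mini',
    refineModel: env.REFINE_MODEL || 'o3-mini',
    agentModel: env.AGENT_MODEL || 'o3-mini',
    maxConcurrency: positiveInt(env, 'MAX_CONCURRENCY', 4),
    agentTimeoutMs: positiveInt(env, 'AGENT_TIMEOUT_MS', 600_000), // 10 minutes
  };
}
```

Targeting a local Ollama server, for example, would mean setting OPENAI_BASE_URL to its OpenAI-compatible endpoint (by default http://localhost:11434/v1) and overriding the three *_MODEL variables with models that server actually has.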

Tech Stack

| Layer | Technology | Rationale |
|---|---|---|
| Runtime | Node.js 22, TypeScript 5 | Native ESM, top-level await, modern APIs |
| HTTP/WS Server | Hono | Lightweight, edge-compatible, built-in WebSocket upgrade |
| Frontend | Svelte 5 (Runes) | Fine-grained reactivity without virtual DOM overhead |
| Styling | Tailwind CSS 4 + shadcn-svelte | Utility-first with accessible component primitives |
| Monorepo | pnpm workspaces | Hoisted dependencies, fast installs |
| Sandboxing | Docker | Process isolation, resource limits, reproducible environments |
| LLM | OpenAI Chat Completions API | JSON mode for structured output, streaming for real-time feedback |

What Makes Codex Swarm Unique

| Capability | Codex Swarm | Typical LLM Orchestrators |
|---|---|---|
| Task modeling | DAG with typed dependency edges | Flat lists or simple chains |
| Scheduling | Event-driven frontier scheduler | Timer-based polling or sequential |
| Parallelism | True concurrent execution bounded by maxConcurrency | Often single-threaded or fire-all |
| Isolation | Docker containers with resource caps per task | Shared process, shared filesystem |
| Conflict avoidance | File-scoped tasks + sandbox copies | Hope for the best |
| Failure handling | Recursive cascading failure + targeted retry | Retry-all or abandon |
| Visibility | Real-time DAG + streaming logs + live diffs | Post-hoc log files |
| LLM integration | Plan-then-execute (LLM plans, Docker executes) | LLM has direct system access |
| Provider flexibility | Any OpenAI-compatible API via OPENAI_BASE_URL | Locked to single provider |

Repository Structure

    codex-swarm/
    ├── packages/
    │   ├── server/                             # Orchestration backend
    │   │   ├── src/
    │   │   │   ├── index.ts                    # Hono server, WebSocket routing
    │   │   │   ├── orchestrator.ts             # DAG scheduler, task lifecycle
    │   │   │   ├── decomposer.ts               # LLM-powered spec→DAG decomposition
    │   │   │   ├── agent.ts                    # LLM action planner (write_file/shell)
    │   │   │   ├── sandbox-runner.ts           # Docker container management
    │   │   │   ├── config.ts                   # Centralized env config
    │   │   │   └── types.ts                    # Shared type definitions
    │   │   └── docker/
    │   │       └── Dockerfile.agent            # Minimal agent container image
    │   └── web/                                # Dashboard frontend
    │       └── src/
    │           ├── app.css                     # Neobrutalist design system
    │           ├── lib/
    │           │   ├── stores/
    │           │   │   └── swarm.svelte.ts     # Reactive state (Svelte 5 Runes)
    │           │   └── components/
    │           │       ├── TaskGraph.svelte    # DAG visualization (SVG)
    │           │       ├── AgentFeed.svelte    # Streaming agent output
    │           │       ├── Timeline.svelte     # Gantt-style execution timeline
    │           │       ├── DiffViewer.svelte   # Syntax-highlighted diffs
    │           │       ├── Summary.svelte      # Live progress counters
    │           │       └── ExportPanel.svelte  # Run data export
    │           └── routes/
    │               └── +page.svelte            # Main dashboard page
    └── docs/
        └── END_TO_END_WORKFLOW.md              # Step-by-step runtime walkthrough

License

MIT — see LICENSE.